A look back at Insomni’hack 2025

Insomni’hack is a cybersecurity conference that’s been running since 2008, organized by SCRT S.A. (now Orange Cyberdefense Switzerland). It takes place in Switzerland and usually lasts a full week.

Each edition follows a pretty packed schedule: from Monday to Wednesday, you get a series of intensive workshops, and then Thursday and Friday are reserved for expert talks on all things security. As is tradition, the highly anticipated on-site Capture the Flag (CTF) competition closes the event.

This year, it happened from March 10th to 14th at the SwissTech Convention Center in Lausanne.

In this post, I’ll share some of the talks I attended, why I found them interesting, and a few key takeaways I think are worth passing along.

1️⃣ Advanced Android Archaeology: Baffled By Bloated Complexity

Speaker: Mathias Payer

TL;DR

This talk explored Android security vulnerabilities across multiple layers, from Trusted Applications (TAs) to user-space protections and supply chain risks. The presenter started with rollback attacks on TAs, explaining how the lack of proper safeguards allows attackers to revert to older, vulnerable versions.

They then shared their work on TA security assessment, which included building EL3XIR, a fuzzing platform, along with a Ghidra plugin for vulnerability analysis. This led to the discovery of 850 vulnerable TAs.

Next, the focus shifted to user-space security and Google’s SCUDO memory allocator, where issues were found due to early randomization seed initialization. Supply chain risks were also discussed. Many Android apps rely on outdated shared libraries, and a large chunk of native code is reused from just 5,000 binaries. When developers fail to update these libraries, it leaves apps exposed.

The key takeaway is that Android security is making progress, but there are still major weak points. Trusted Applications, memory protection, and the software supply chain all need more attention to improve the ecosystem as a whole.

Key points & Technical content

Rollback attacks

When a trustlet is loaded, its digital signature is validated. Typically, this signature is created by signing a hash of the trustlet (or of certain parts of the trustlet) with the vendor's private key.

The signature can then be verified with the matching public key. For security purposes, the key pairs are anchored in a chain of trust that originates in hardware. Ideally, once the system is updated, older software such as trustlets can no longer be loaded on the newer system.

However, without proper safeguards, trustlets can be replaced with their older versions. Because an older trustlet still carries a valid signature from the same vendor key, signature verification alone accepts it. If the older version has a vulnerability that the newer version patches, an attacker can swap the patched trustlet for the old one and exploit it anyway. This is called a downgrade or rollback attack.
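A toy model makes the gap concrete. The sketch below assumes an HMAC as a dependency-free stand-in for the vendor's asymmetric signature, and a hypothetical min_version value standing in for a hardware anti-rollback counter (for example fuses or a monotonic counter in secure storage); the trustlet name and contents are made up. Signature verification alone accepts the old, validly signed image; only the version comparison rejects it.

```python
import hashlib
import hmac
from dataclasses import dataclass

# Hypothetical vendor key. Real trustlets use asymmetric signatures
# (private key signs, public key verifies); HMAC keeps the sketch stdlib-only.
VENDOR_KEY = b"hypothetical-vendor-key"

@dataclass(frozen=True)
class Trustlet:
    name: str
    version: int
    code: bytes
    signature: bytes

def _message(name: str, version: int, code: bytes) -> bytes:
    return f"{name}:{version}:".encode() + code

def sign(name: str, version: int, code: bytes) -> Trustlet:
    sig = hmac.new(VENDOR_KEY, _message(name, version, code), hashlib.sha256).digest()
    return Trustlet(name, version, code, sig)

def signature_ok(t: Trustlet) -> bool:
    expected = hmac.new(VENDOR_KEY, _message(t.name, t.version, t.code), hashlib.sha256).digest()
    return hmac.compare_digest(expected, t.signature)

def load(t: Trustlet, min_version: int, check_rollback: bool = True) -> bool:
    """Return True if the loader would accept this trustlet."""
    if not signature_ok(t):
        return False
    # Anti-rollback: refuse images older than the version recorded in
    # (hypothetical) tamper-resistant storage.
    if check_rollback and t.version < min_version:
        return False
    return True

old = sign("keymaster", 1, b"vulnerable build")
new = sign("keymaster", 2, b"patched build")
print(load(old, min_version=2, check_rollback=False))  # True: old image is still validly signed
print(load(old, min_version=2))                         # False: rejected by the version check
print(load(new, min_version=2))                         # True
```

The design point is that the minimum version must live somewhere the attacker cannot roll back as well; otherwise the check only moves the problem.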

While rollback attacks demonstrate how outdated trustlets can reintroduce exploitable vulnerabilities, they also underscore the importance of thoroughly testing the security boundaries of TAs (particularly their Secure Monitor Calls) through methods such as fuzzing.

Fuzzing Trusted Applications & Secure Monitor Calls (SMCs)

One way to find vulnerabilities in TAs is to fuzz them through their SMC interface. SMC stands for Secure Monitor Call. It’s a special instruction in the ARM architecture that allows software running in the Normal World (REE) or Secure World (TEE) to switch execution between those two worlds.

It is how software asks the Secure World to perform a privileged operation or request a service (e.g., decrypt data, use a key, verify integrity), and how a world switch is initiated.

For instance, a TA can implement a service for decrypting data. To make that service callable from the Normal World, the TA has to expose a function/interface that is triggered when an SMC requesting that specific function is received. Provided the right arguments are passed via CPU registers, the function does its job and returns its result via CPU registers.

Finding vulnerabilities in the TA by fuzzing the SMC then consists of targeting the SMC call interface, which typically includes:

- Function IDs (which specify the service you’re calling)

- Arguments (passed in registers, e.g. r0-r7 on 32-bit ARM or x0-x7 on AArch64)

- Data pointers (sometimes you pass references to buffers in shared memory)
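On Arm platforms, the function ID in the first register usually follows the Arm SMC Calling Convention (SMCCC), which packs the call type, the 32/64-bit convention, the owning entity, and the function number into one 32-bit value. Knowing this layout lets a fuzzer generate well-formed IDs instead of purely random ones. A small decoder, assuming the target follows SMCCC (some proprietary TEEs use their own schemes); the helper names are illustrative:

```python
from typing import NamedTuple

class SmcFunctionId(NamedTuple):
    fast_call: bool   # bit 31: 1 = fast call, 0 = yielding call
    smc64: bool       # bit 30: 1 = SMC64 convention, 0 = SMC32
    owner: int        # bits 29:24: owning entity number (OEN)
    number: int       # bits 15:0: function number within that owner

# A few owning-entity numbers defined by the SMCCC specification.
OWNERS = {
    0: "Arm architecture calls",
    1: "CPU service calls",
    2: "SiP service calls",
    3: "OEM service calls",
    4: "Standard secure service calls (e.g. PSCI)",
}

def decode(fid: int) -> SmcFunctionId:
    return SmcFunctionId(
        fast_call=bool((fid >> 31) & 1),
        smc64=bool((fid >> 30) & 1),
        owner=(fid >> 24) & 0x3F,
        number=fid & 0xFFFF,
    )

def encode(fast_call: bool, smc64: bool, owner: int, number: int) -> int:
    return (int(fast_call) << 31) | (int(smc64) << 30) | ((owner & 0x3F) << 24) | (number & 0xFFFF)

def owner_name(owner: int) -> str:
    if 48 <= owner <= 49:
        return "Trusted Application calls"
    if 50 <= owner <= 63:
        return "Trusted OS calls"
    return OWNERS.get(owner, "reserved/other")
```

For example, decode(0x84000000), the PSCI_VERSION call, gives a fast SMC32 call to owner 4 with function number 0, and a fuzzer can use encode() to sweep function numbers within one owner range.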

The fuzzer will:

- Generate random or crafted values for function IDs and arguments.

- Issue SMC calls with these values.

- Observe behavior: Watch for crashes, hangs, unexpected return codes, or error messages.

- Record inputs that lead to interesting behavior for deeper analysis.
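The four steps above map onto a very small loop. The sketch below runs it against a hypothetical, in-process toy handler rather than a real TEE (a real harness would issue SMCs through a kernel driver and detect crashes, hangs, and resets on the device); the handler, its function IDs, and the planted missing length check are all illustrative assumptions.

```python
import random
from collections import Counter
from typing import List

BUF_SIZE = 64  # hypothetical size of the handler's internal buffer

class TargetCrash(Exception):
    """Models a fault in the secure-world handler."""

def toy_smc_handler(fid: int, args: List[int]) -> int:
    """Hypothetical handler. Function 2 copies args[0] bytes into a
    BUF_SIZE buffer without a length check; an oversize length 'crashes'."""
    if fid == 0:
        return 0x00010000               # e.g. a version query
    if fid == 1:
        return 0                        # e.g. a service that always succeeds
    if fid == 2:
        if args[0] > BUF_SIZE:          # models the bounds check the handler forgot
            raise TargetCrash(f"copy of {args[0]} bytes overflows buffer")
        return 0
    return 0xFFFFFFFF                   # unknown function ID: error code

def fuzz(handler, iterations: int, seed: int = 0):
    rng = random.Random(seed)
    crafted_fids = [0, 1, 2, 3, 0xFFFFFFFF]   # known IDs plus edge cases
    crashes, return_codes = [], Counter()
    for _ in range(iterations):
        # Generate: mostly crafted function IDs, sometimes fully random ones.
        fid = rng.choice(crafted_fids) if rng.random() < 0.8 else rng.getrandbits(32)
        args = [rng.getrandbits(32) for _ in range(6)]
        try:
            ret = handler(fid, args)    # issue the call
        except TargetCrash as exc:
            crashes.append((fid, args, str(exc)))   # record the crashing input
            continue
        return_codes[ret] += 1          # observe: tally return codes for oddities
    return crashes, return_codes
```

Calling fuzz(toy_smc_handler, 1000) should return crashing inputs that all call function 2 with an oversize length, plus a tally of return codes in which an unexpected value would stand out.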

EL3XIR

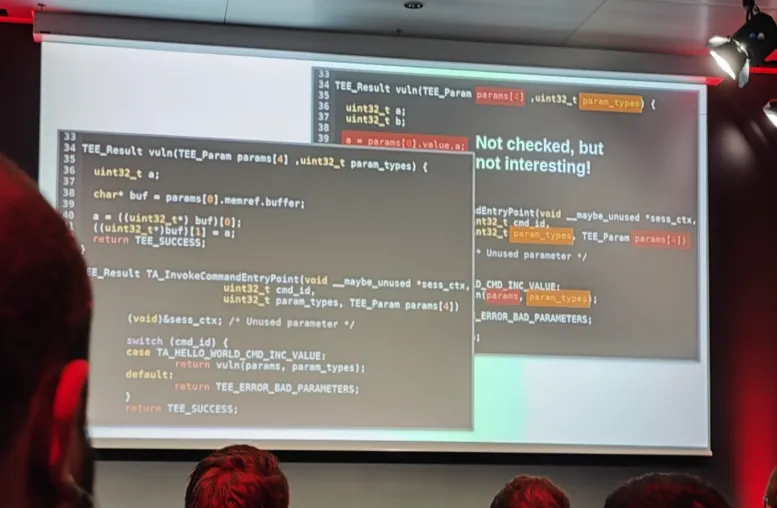

The researchers developed a fuzzing platform called EL3XIR to test the entry points of TAs, particularly focusing on TA_InvokeCommandEntryPoint.

- Developers often fail to properly validate the parameters passed to these commands. For example, attackers could pass manipulated memory references (memrefs) that, because of missing size and buffer checks, give them unintended read/write access.

- A Ghidra plugin was developed by the team to automate the process of verifying whether TAs properly check their input parameters.
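In the GlobalPlatform TEE Internal Core API, TA_InvokeCommandEntryPoint receives a packed paramTypes word (four 4-bit type fields) alongside four parameters, each either a memory reference or a pair of values. The kind of check such an analysis looks for is sketched below as a Python model: the constant values follow the GP specification, while the copy command, the handle_copy name, and the dict-based parameters are illustrative stand-ins for the real C structures. A TA that skips the paramTypes comparison would treat caller-chosen values as a buffer pointer and size.

```python
# Constant values as defined by the GlobalPlatform TEE Internal Core API.
TEE_PARAM_TYPE_NONE = 0x0
TEE_PARAM_TYPE_VALUE_INPUT = 0x1
TEE_PARAM_TYPE_MEMREF_INPUT = 0x5
TEE_PARAM_TYPE_MEMREF_OUTPUT = 0x6
TEE_SUCCESS = 0x00000000
TEE_ERROR_BAD_PARAMETERS = 0xFFFF0006
TEE_ERROR_SHORT_BUFFER = 0xFFFF0010

def tee_param_types(t0: int, t1: int, t2: int, t3: int) -> int:
    """Python equivalent of the TEE_PARAM_TYPES(t0, t1, t2, t3) macro."""
    return t0 | (t1 << 4) | (t2 << 8) | (t3 << 12)

def tee_param_type_get(param_types: int, index: int) -> int:
    """Python equivalent of TEE_PARAM_TYPE_GET(t, i)."""
    return (param_types >> (index * 4)) & 0xF

# Hypothetical command: copy an input buffer into an output buffer.
EXPECTED_TYPES = tee_param_types(TEE_PARAM_TYPE_MEMREF_INPUT,
                                 TEE_PARAM_TYPE_MEMREF_OUTPUT,
                                 TEE_PARAM_TYPE_NONE,
                                 TEE_PARAM_TYPE_NONE)

def handle_copy(param_types: int, params: list) -> int:
    # 1. Reject any call whose parameter layout is not exactly the expected one.
    #    Without this, a VALUE parameter (a, b) chosen by the caller could be
    #    interpreted as a memref (buffer pointer, size): a type confusion.
    if param_types != EXPECTED_TYPES:
        return TEE_ERROR_BAD_PARAMETERS
    src, dst = params[0], params[1]
    # 2. Check sizes before copying; report the required size, per GP convention.
    if dst["size"] < src["size"]:
        dst["size"] = src["size"]
        return TEE_ERROR_SHORT_BUFFER
    dst["buffer"][: src["size"]] = src["buffer"][: src["size"]]
    dst["size"] = src["size"]
    return TEE_SUCCESS
```

A static checker like the one described would, in essence, look for whether a path equivalent to step 1 exists before the parameters are dereferenced.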

Their large-scale analysis revealed:

- 6900 TAs were analyzed for compliance with GlobalPlatform (GP) standards.

- 850 of these were found to have vulnerabilities, including improper parameter validation.

User-Space Protections: SCUDO Memory Allocator

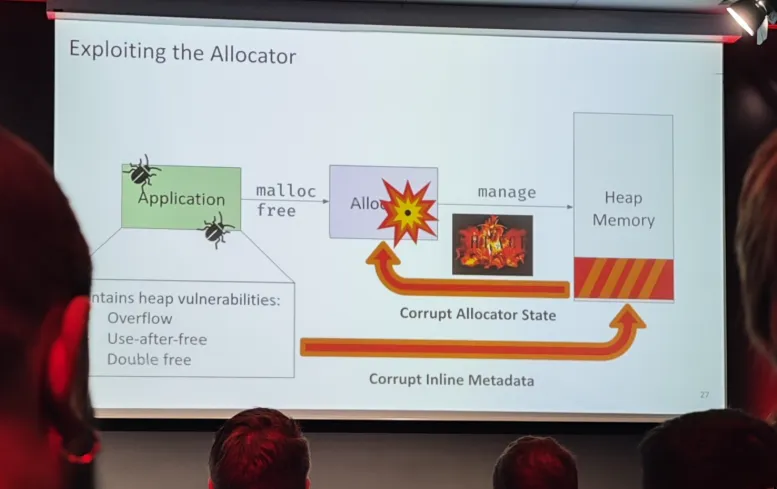

The focus then shifted to user-space security. Google’s SCUDO allocator was examined for its effectiveness against heap-based attacks.

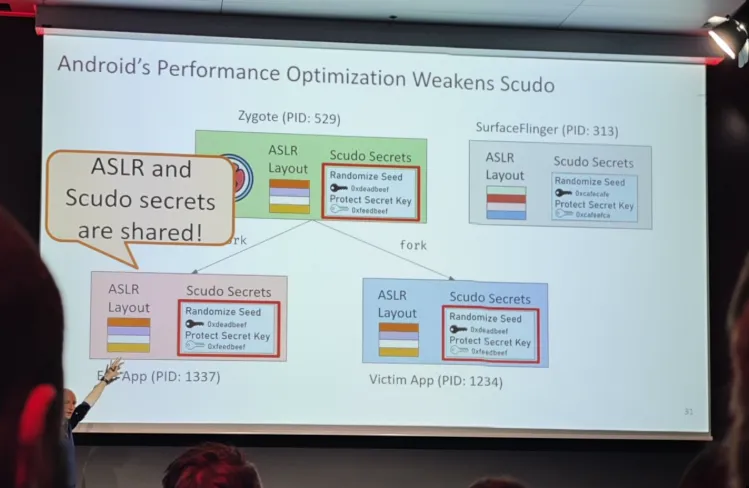

SCUDO employs randomization seeds and metadata signing (checksummed chunk headers) to detect certain types of memory corruption, such as overflows. However, the randomization seed is initialized early, in the zygote process. Since Android apps are forked from zygote, they likely inherit the same seed, which reduces its effectiveness.
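The inheritance problem can be illustrated with a toy model of checksummed chunk headers. This is not Scudo's real header layout or checksum computation; it assumes a 32-bit random cookie mixed into a CRC32 over the chunk address and header, and models fork() as copying the parent's cookie. The ToyAllocator class and the addresses are hypothetical. A one-bit header corruption is caught, but if the cookie is shared, knowing it in one app is enough to forge headers that another zygote child accepts.

```python
import secrets
import struct
import zlib

class ToyAllocator:
    """Toy model of checksummed chunk headers (not Scudo's real format)."""

    def __init__(self, cookie=None):
        # Random per-process secret, analogous to an allocator cookie/seed.
        self.cookie = secrets.randbits(32) if cookie is None else cookie

    def checksum(self, addr: int, header: int) -> int:
        data = struct.pack("<IQQ", self.cookie, addr, header)
        return zlib.crc32(data)

    def verify(self, addr: int, header: int, stored: int) -> bool:
        return self.checksum(addr, header) == stored

    def fork(self) -> "ToyAllocator":
        # fork() copies the parent's memory, so the child inherits the cookie
        # instead of drawing a fresh one: the zygote situation.
        return ToyAllocator(cookie=self.cookie)

zygote = ToyAllocator()
app_a, app_b = zygote.fork(), zygote.fork()
addr, header = 0x7000_0000_1000, 0x40
stored = app_a.checksum(addr, header)
print(app_a.verify(addr, header ^ 1, stored))  # False: corrupted header detected
# Anyone who learns app_a's cookie can compute checksums app_b accepts.
forged = 0x40 | (1 << 40)
print(app_b.verify(addr, forged, app_a.checksum(addr, forged)))  # True
```

Re-drawing the cookie after fork would break the last line, at the cost of extra work in every new process.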

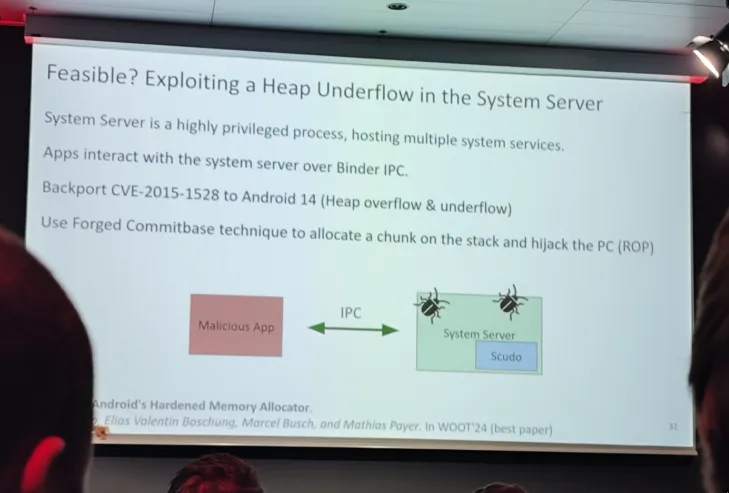

The researchers demonstrated a heap underflow exploit targeting the system server process, highlighting ongoing concerns about user-space security on Android.

While improvements in user-space protections, such as the SCUDO allocator, aim to mitigate memory-related attacks, broader supply chain concerns remain. The speaker then presented their study of how third-party libraries, which are often outdated or vulnerable, can undermine these security advances and expose apps to known threats.

Supply Chain Security and Shared Libraries in Android Apps

Starting from the assumption that many developers rely on outdated or vulnerable third-party libraries, they conducted an ecosystem-wide study of the native libraries used by Android apps to assess supply chain risks. They found that 5,000 binaries accounted for 77.4% of all native libraries across apps, indicating a high degree of code sharing and potential exposure.
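A figure like this is a concentration measure: group identical library files, count how many times each ships across apps, and compute the share covered by the top N. A minimal sketch of that arithmetic, assuming exact SHA-256 matches stand in for "same binary" (the researchers also used binary-similarity models to match non-identical builds) and using made-up example data; top_n_share is a hypothetical helper name:

```python
import hashlib
from collections import Counter
from typing import Dict, List

def top_n_share(apps: Dict[str, List[bytes]], n: int) -> float:
    """Fraction of all (app, library) occurrences covered by the n most
    common distinct library binaries, identified by SHA-256."""
    counts = Counter(
        hashlib.sha256(lib).hexdigest()
        for libs in apps.values()
        for lib in libs
    )
    total = sum(counts.values())
    if total == 0:
        return 0.0
    covered = sum(c for _, c in counts.most_common(n))
    return covered / total

# Made-up example: libA ships in all three apps, libB in two, libC in one.
apps = {
    "app1": [b"libA", b"libB"],
    "app2": [b"libA", b"libC"],
    "app3": [b"libA", b"libB"],
}
print(top_n_share(apps, 1))  # 0.5: one binary covers 3 of 6 occurrences
```

The higher this share for a small N, the more a single unpatched library propagates across the ecosystem.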

They used machine learning models, such as asm2vec and jTrans, to identify binary similarities. However, it was mentioned that these models struggled to scale effectively to large datasets.

Specific vulnerabilities were found in popular apps like WhatsApp (e.g., double-free and memory allocation issues in the Droidsonroids GIF library).

Their research emphasized a worrying trend: many developers fail to update libraries, leaving apps exposed to known CVEs.

2️⃣ You can’t touch this: Secure Enclaves for Offensive Operations

Speakers: Matteo Malvica & Cedric Van Bockhaven

TL;DR

They showed how Microsoft’s VBS enclaves can be used offensively, either by creating your own enclave or by abusing existing signed enclave DLLs. Although enclaves do offer stealth advantages (e.g., hiding data/code from normal user-space tools), limitations such as the minimal set of available system calls and the lack of easy RWX memory inside enclaves still make them challenging to fully weaponize for real‐world attacks.

Key points & Technical content

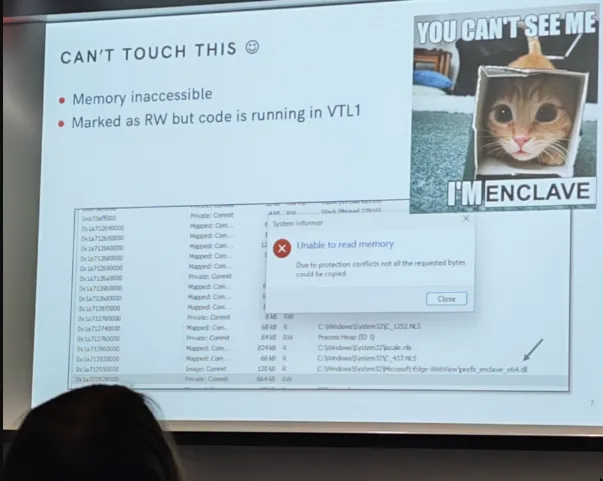

This presentation explained how Windows Virtualization-Based Security (VBS) enclaves provide an isolated “secure world” within the operating system and examined ways attackers might leverage them offensively.

The speakers first covered the basic architecture: VBS enclaves run in a higher trust level (VTL1) than normal user space (VTL0), effectively protecting their contents from standard process inspection.

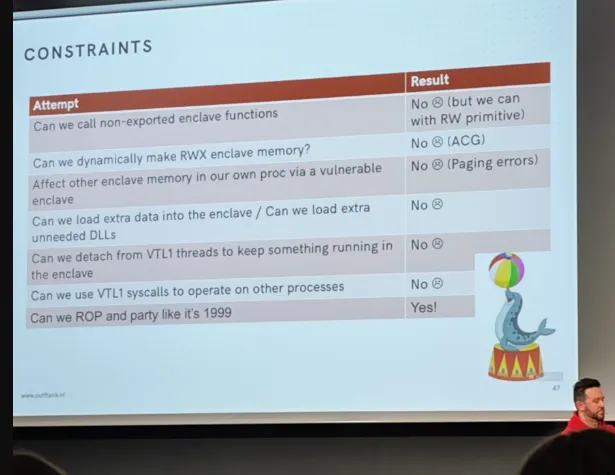

They then demonstrated two main approaches to working with enclaves:

Building an enclave from scratch

Showing how one can write and sign a custom enclave DLL, load it via CreateEnclave(), and use built-in enclave APIs (like SealSettings/UnsealSettings) to encrypt and decrypt data in a protected memory region.

Abusing existing Microsoft‐signed enclave DLLs

Since creating a custom enclave requires special signing steps (e.g., “Azure Trusted Signing”) and can disclose developer certificates, the speakers explored pre‐installed enclaves such as:

- prefs_enclave_x64.dll (used by Microsoft Edge)

- SFAPE.dll (for enhanced phishing protection)

By reverse engineering and testing these DLLs, they found ways to seal/unseal data or gain read/write capabilities, potentially letting an attacker place or protect malicious implants in a region invisible to normal user‐mode inspection.

They walked through how enclaves map and load into memory (with VTL0 controlling where images end up, but VTL1 protecting the enclave’s internals). They discussed sleep masking: using an enclave so a “beacon” (or malicious thread) can remain hidden and protected while “sleeping.”

They also tackled various limitations such as the difficulty of debugging enclaves, the inability to dynamically allocate executable memory inside them, and the minimal set of system calls available at VTL1.

Ultimately, while enclaves do grant some powerful stealth benefits (e.g., hiding code or data from typical user‐mode tools and certain EDR/AV hooks), there are still significant constraints.

In the end, the speakers concluded that although it is possible to seal an implant in an enclave (“making it untouchable”) and even chain read/write primitives into code execution under certain conditions, fully weaponizing VBS enclaves remains challenging.

They foresee that future enclave DLLs, or newly discovered vulnerabilities in existing ones, might make enclaves a more potent offensive tool. For now, though, enclaves offer only partial stealth or obfuscation advantages.

3️⃣ Demystifying Automated City Shuttles: Blessing or Cybersecurity Nightmare?

Speaker: Anastasija Collen

TL;DR

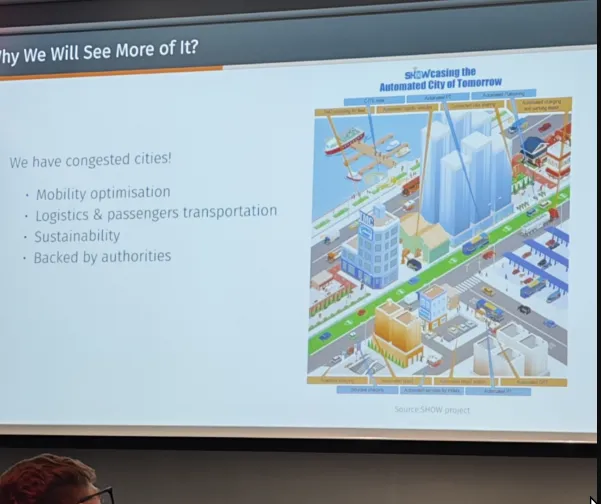

This presentation, delivered by Anastasija Collen and drawing on research from the I-Sec team at the University of Geneva (UNIGE), examined the promise of Automated City Shuttles (ACS) alongside the privacy and security risks they pose.

The presentation underlined that Automated City Shuttles can significantly improve urban transportation but must be deployed responsibly. Robust security practices, clear regulatory frameworks, and thoughtful privacy protections are vital to ensure that ACS truly benefit cities without undermining public trust.

The speaker talked about two main topics:

The challenges:

- Security and Privacy Risks: ACS have inherent vulnerabilities in their sensor and communication systems, and companies often have broad, unchecked access to user data.

- Slow Regulation: Legal frameworks struggle to keep up with rapid innovation, leaving gaps for exploitation or privacy infringement.

- Complex Ecosystem: With many stakeholders exchanging data, accountability and data governance are difficult to define.

The opportunities:

- Growing Market: ACS technology is expanding, offering new possibilities for mobility, sustainability, and efficiency.

- Cybersecurity Niche: Rising demands for secure and trustworthy automated vehicles create a robust field for security professionals.

- Strategic Technology: ACS could reshape urban infrastructure if implemented with balanced regulation and user trust in mind.

Key points & Technical content

This presentation is quite engaging because it tackles a hot topic, namely self-driving city shuttles. It lays out both their disruptive potential and the very real security and privacy concerns that can’t be ignored.

By raising open questions around liability and data protection, it left us attendees with plenty to think about and perhaps a sense of urgency to address these challenges head-on.

The Rise of Automated City Shuttles

ACS could revolutionize public transport and logistics in congested cities by offering on-demand, low-speed transport for up to sixteen passengers.

A real-world Swiss pilot program was highlighted, along with recent regulatory moves, in particular a Swiss ordinance that allows highway autopilot activation (SAE L3), automated parking, and driverless (SAE L4) vehicles, albeit with remote monitoring requirements.

Core Technologies in ACS

Having discussed the practical rollout and regulatory landscape of automated city shuttles, the speaker then briefly explained the underlying innovations that make them possible. This involves examining the suite of sensors, perception modules, and communication mechanisms that power these next-generation urban mobility solutions.

Sensors and Perception:

- Radar for adaptive cruise control and blind-spot detection.

- LiDAR for generating 3D maps of the environment.

- Cameras for lane detection, traffic signals, and object recognition.

- Infrared for low-visibility pedestrian detection and emergency braking.

- Ultrasound for close-range detection (e.g., parking).

- GNSS (GPS) for positioning and route planning.

Communications:

Vehicle-to-Infrastructure (V2I), Vehicle-to-Vehicle (V2V), and broader data exchange are critical for connected, automated functionality.

Cybersecurity Threats and Vulnerabilities

She then turned to the question we may all have: what are the threats and vulnerabilities? Although ACS technology has existed in some form since 2016, many of its vulnerabilities (spoofing, jamming, or tampering with GNSS, LiDAR, cameras, and other systems) are not widely publicized.

She highlighted the fact that penetration tests and risk assessments show that malicious actors could target sensors, communication protocols (e.g. rogue base stations, GNSS spoofing), or system-level software.

She summarized the results of these tests:

- GNSS spoofing often had little effect on vehicle operation because of built-in validation or encryption.

- GNSS jamming could force the vehicle to halt, demonstrating a potentially disruptive attack vector.

- Data flows among various service providers and roadside units remain a concern if poorly secured.
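The talk did not detail how the shuttles validate GNSS data, but a common approach is a plausibility check: reject fixes that imply an impossible speed for a low-speed vehicle, and stop safely if no fix arrives for too long. That pattern would produce both results above (spoofed jumps filtered out, jamming forcing a halt). A sketch under those assumptions; the speed limit, timeout, GnssMonitor class, and state names are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Optional

EARTH_RADIUS_M = 6_371_000.0
MAX_SPEED_MPS = 10.0   # hypothetical bound for a low-speed shuttle
FIX_TIMEOUT_S = 2.0    # hypothetical: how long a lost fix is tolerated

@dataclass
class Fix:
    t: float                 # timestamp in seconds
    lat: Optional[float]     # None models "no fix" (e.g. jamming)
    lon: Optional[float]

def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp, dl = p2 - p1, math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

class GnssMonitor:
    def __init__(self) -> None:
        self.last_good: Optional[Fix] = None

    def update(self, fix: Fix) -> str:
        if fix.lat is None or fix.lon is None:
            if self.last_good is None or fix.t - self.last_good.t > FIX_TIMEOUT_S:
                return "SAFE_STOP"   # fix lost for too long: halt
            return "HOLD"            # brief dropout: keep last good estimate
        if self.last_good is not None:
            dt = fix.t - self.last_good.t
            dist = haversine_m(self.last_good.lat, self.last_good.lon, fix.lat, fix.lon)
            if dt <= 0 or dist / dt > MAX_SPEED_MPS:
                return "REJECT"      # implausible jump: possible spoofing
        self.last_good = fix
        return "OK"
```

A slow, gradual spoof would pass this check, which is why vehicles typically also fuse GNSS with other sensors such as odometry or LiDAR-based localization.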

Privacy Concerns

She then raised some very important privacy issues. Basically, ACS collect tons of data (video footage, audio from microphones, sensor readings, location traces), and these systems are often run by private companies.

This raises concerns about potential misuse, like real-time surveillance, stalking, or even inferring people’s habits from their location history. Plus, it’s unclear who has access to this data and how it’s being shared.

She also pointed out regulatory issues, since privacy laws haven’t kept up with the technology. This raises questions about who is responsible if there’s a data breach and whether people can actually opt out of having their data used.

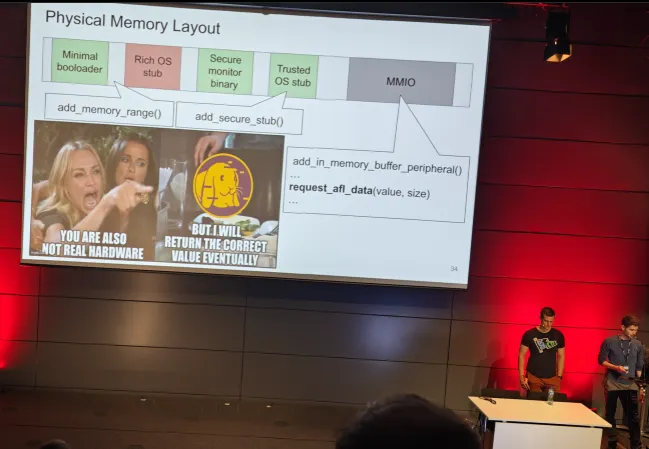

4️⃣ EL3XIR: Fuzzing COTS Secure Monitors

Speakers: Marcel Busch & Christian Lindenmeier

TL;DR

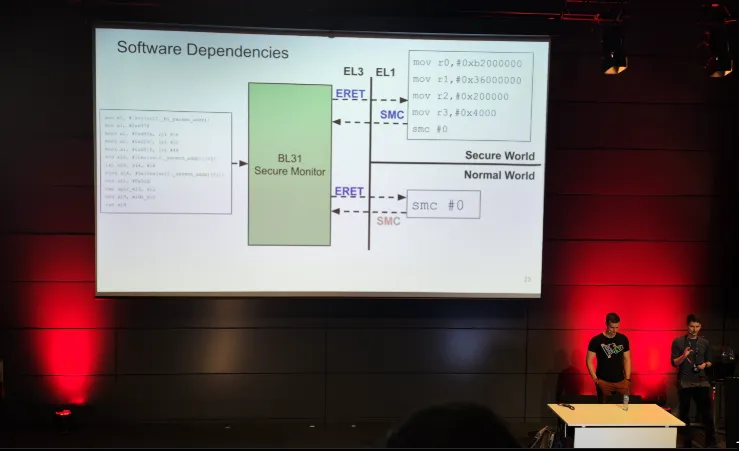

In this talk, the speakers introduced EL3XIR, a new framework for rehosting and fuzzing the secure monitor (EL3) firmware in ARM TrustZone‐based devices.

The secure monitor is often overlooked in security research. By partially rehosting the monitor firmware (BL31) and simulating hardware/peripherals in QEMU, EL3XIR systematically triggers and detects bugs through coverage‐guided fuzzing.

The result: 34 discovered bugs, 17 of which are security‐critical, leading to multiple CVEs and confirmations from major vendors.

Key Takeaways

- Secure monitor fuzzing is achievable with careful partial rehosting and selective hardware emulation.

- Simplifying the environment by removing unnecessary Trusted OS or kernel functionality enables faster and more targeted bug discovery in the sensitive EL3 firmware.

- The talk underscores the importance of fuzzing every link in the security chain, including the privileged layers like the secure monitor.

Overall, the presentation demonstrated how EL3XIR extends fuzzing to proprietary secure monitor firmware, illuminating a less‐explored attack surface in mobile and embedded systems. Through rehosting and targeted emulation, the speakers showed that even complex, privileged code can be systematically tested for security‐critical bugs.

Key points & Technical content

The secure monitor (running at EL3 on ARM Cortex‐A processors) plays a crucial role in switching between the Normal World (rich operating system) and the Secure World (Trusted Execution Environment, or TEE).

Because the secure monitor handles sensitive operations, such as switching contexts, handling secure monitor calls (SMCs), and interacting with various hardware peripherals, bugs at this level can compromise an entire system’s integrity.

Their motivation and challenges

Modern fuzzing workflows, which are typically well established and effective for user-space or kernel-space targets, are not easily adaptable to the highly privileged secure monitor code. This difficulty arises due to several factors.

Secure Monitor’s Privileged Nature: The secure monitor operates in a highly privileged environment, often with direct access to hardware and sensitive system states. Fuzzing in such an environment requires careful consideration to avoid unintended consequences, such as system crashes or security breaches.

Hardware Dependencies: Secure monitor code often interacts closely with hardware components, requiring real hardware for accurate testing. However, using real hardware for fuzzing introduces challenges like repeatable resets, maintaining deterministic state, and obtaining fast coverage feedback.

Complex SMC Interactions: Secure monitors expose a complex Secure Monitor Call (SMC) interface for communication and control. Off-the-shelf emulators like QEMU lack native support for these intricate, vendor-specific SMC interactions, making it difficult to accurately emulate the secure monitor’s behavior and hindering effective fuzzing.

These challenges collectively impede the systematic fuzzing of secure monitor code, making it more difficult to discover and address potential vulnerabilities.

Their approach

EL3XIR focuses on a partial rehosting approach, extracting only the secure monitor binary (BL31 in many ARM‐based systems) and stubbing out or simulating the rest of the software stack and hardware dependencies.

Minimal “stub” implementations replace the bootloaders (BL1/BL2) and the Trusted OS (BL32) so that the secure monitor code can be driven in an emulator (QEMU + Avatar2) without requiring the full device or OS.

Peripheral interactions (e.g., MMIO accesses) are replaced with lightweight emulation, returning plausible data or simulating device responses for coverage feedback and bug triggering.
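Selective hardware emulation often boils down to answering the firmware's MMIO reads with values that let it make progress, for example a status register that reports "ready" so a polling loop terminates, and a data register fed from the fuzzer's input. The sketch below models this in plain Python; the peripheral, its register addresses, MmioModel, and the init_rng routine are hypothetical, and a real setup would hook these accesses inside QEMU/Avatar2 rather than call Python functions.

```python
from typing import Callable, Dict, List

# Hypothetical register map of a peripheral the monitor talks to.
RNG_STATUS = 0x1000_0000
RNG_DATA = 0x1000_0004
READY_BIT = 0x1

class MmioModel:
    """Answers MMIO reads with plausible values; unknown addresses read as 0."""

    def __init__(self, fuzz_input: bytes = b""):
        self.reads: List[int] = []
        self._fuzz = iter(fuzz_input)
        self.handlers: Dict[int, Callable[[], int]] = {
            RNG_STATUS: lambda: READY_BIT,          # always report "ready"
            RNG_DATA: lambda: next(self._fuzz, 0),  # feed fuzzer-controlled data
        }

    def read32(self, addr: int) -> int:
        self.reads.append(addr)
        handler = self.handlers.get(addr)
        return handler() if handler else 0

def init_rng(mmio: MmioModel, max_polls: int = 1000) -> int:
    """Stand-in for firmware code: poll until ready, then read one value."""
    for _ in range(max_polls):
        if mmio.read32(RNG_STATUS) & READY_BIT:
            return mmio.read32(RNG_DATA)
    raise TimeoutError("peripheral never became ready")
```

With MmioModel(b"\x2a"), init_rng returns 42 after one status read; without a model (a status register that always reads 0), the same firmware path would spin until it times out, which is exactly what blocks naive emulation.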

Implementation and tooling

The speakers explained how a coverage-guided fuzzer, such as AFL or AFL++, can be used with QEMU’s emulation to systematically generate inputs (SMCs) to test the secure monitor’s functionality.
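The value of coverage guidance is easiest to see on a toy target. The sketch below is a minimal coverage-guided loop in the spirit of AFL (not AFL itself, and not EL3XIR's harness): the hypothetical toy_monitor records which branches an input reaches, and any input that reaches a new branch is kept in the corpus for further mutation.

```python
import random
from typing import List, Optional, Set, Tuple

class Crash(Exception):
    """Models a fault detected by the harness."""

def toy_monitor(data: bytes, cov: Set[str]) -> None:
    """Hypothetical SMC handler: nested checks on the first three input
    bytes guard a buggy path. Each branch taken is recorded in cov."""
    cov.add("entry")
    if len(data) >= 3 and data[0] == 0x53:      # 'S'
        cov.add("b0")
        if data[1] == 0x4D:                     # 'M'
            cov.add("b1")
            if data[2] == 0x43:                 # 'C'
                raise Crash("reached buggy path")

def mutate(data: bytes, rng: random.Random) -> bytes:
    """Overwrite one random byte with a random value."""
    buf = bytearray(data)
    buf[rng.randrange(len(buf))] = rng.randrange(256)
    return bytes(buf)

def coverage_guided_fuzz(target, seed_input: bytes, max_iters: int,
                         seed: int = 0) -> Tuple[Optional[bytes], int, List[bytes]]:
    rng = random.Random(seed)
    corpus = [seed_input]
    seen: Set[str] = set()
    target(seed_input, seen)                    # baseline coverage
    for i in range(max_iters):
        candidate = mutate(rng.choice(corpus), rng)
        cov: Set[str] = set()
        try:
            target(candidate, cov)
        except Crash:
            return candidate, i + 1, corpus
        if not cov <= seen:                     # new branch reached: keep input
            seen |= cov
            corpus.append(candidate)
    return None, max_iters, corpus
```

Starting from an all-zero 4-byte seed, the loop typically reaches the bug within several thousand iterations, because each solved byte is saved in the corpus; a blind random fuzzer would need about 2^24 attempts on average to guess all three bytes at once.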

They discussed techniques, such as injecting or faking hardware responses, for overcoming hardware-dependent code paths. Additionally, they showed how to snapshot and reset the monitor state to enable repeatable fuzz cycles.
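Snapshot-and-reset matters because the monitor keeps state across calls: without restoring it, a crash might depend on what earlier inputs did and fail to reproduce in isolation. A minimal sketch of the idea, assuming the whole emulated state fits in a Python object (a real harness would snapshot CPU registers and memory inside the emulator); ToyMonitorState, its fields, and the planted stateful bug are hypothetical.

```python
import copy
from dataclasses import dataclass, field
from typing import List

@dataclass
class ToyMonitorState:
    """Hypothetical mutable state a secure monitor keeps between SMCs."""
    registered_handlers: List[int] = field(default_factory=list)
    counter: int = 0

def handle_smc(state: ToyMonitorState, fid: int) -> None:
    state.counter += 1
    if fid == 0x10:
        state.registered_handlers.append(fid)
    # Hypothetical stateful bug: only reachable after several registrations.
    if len(state.registered_handlers) > 3:
        raise RuntimeError("handler table overflow")

def run_case(snapshot: ToyMonitorState, inputs: List[int]) -> bool:
    """Restore a fresh copy of the snapshot, replay one test case,
    and report whether it crashed. The snapshot itself is never modified."""
    state = copy.deepcopy(snapshot)
    try:
        for fid in inputs:
            handle_smc(state, fid)
    except RuntimeError:
        return True
    return False
```

With resets, run_case(snapshot, [0x10]) never crashes and run_case(snapshot, [0x10] * 4) always does; without them, the fourth "independent" single-call case would crash on shared state, pointing at the wrong input.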

Their results

EL3XIR was applied to seven different targets from six vendors, including both open-source and closed-source options. This process uncovered 34 total bugs, of which 17 were considered security-relevant.

As a result, six CVEs were issued, and 14 bugs were confirmed by vendors such as Samsung, AMD, Intel, Huawei, and NXP. These findings highlight the real-world impact of fuzzing this often under-analyzed security layer.

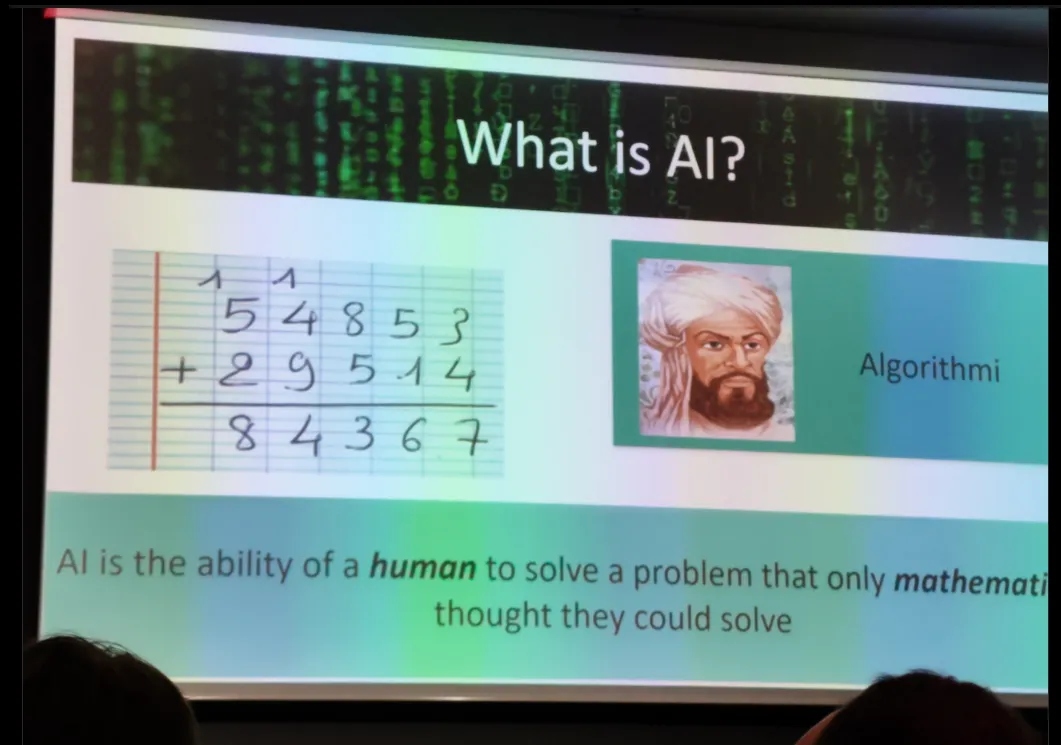

5️⃣ The AI Paradox

Speaker: Rachid Guerraoui

TL;DR

This talk was all about how AI is changing and the weird situation we’re in, with it being both super powerful and potentially making some big mistakes. The speaker started way back with people like Pascal and Turing, then jumped to cool stuff like Deep Blue beating the world chess champion and AlphaGo mastering Go.

Over time, AI got faster and smarter with advances like machine learning, and then ChatGPT appeared. Yet even though ChatGPT is really interesting, it also sometimes makes stuff up.

The speaker uses this image of AI starting out like performing animals and then becoming more like pets we live with. But the main thing is this tug-of-war between AI being really good at doing things quickly and accurately, and also the risk that its mistakes could be massive.

The presentation ends by wondering if AI can even check itself, which is a big question about making sure it’s safe and that we’re still in control.

Key points & Technical content

Historical context

The central idea is that as AI becomes more capable, questions of reliability, control, and safety grow more urgent. The speaker started with some historical context:

- Blaise Pascal: Early mechanical calculators.

- Alan Turing (1930s): Theoretical foundations of computation and the notion that some problems (e.g., the Halting Problem) cannot be definitively solved by algorithms.

- Milestones in AI:

Deep Blue (1997): Famous for defeating world chess champion Garry Kasparov (mentioned in relation to Bobby Fischer’s influence).

Jeopardy! (2011): IBM’s Watson system.

Rembrandt (2016): An example of generative AI creating new art in the style of Rembrandt.

Go (2017): AlphaGo’s victory over professional Go players, demonstrating AI’s “intuitive” leaps via self-learning.

Why Now?

AI has progressed rapidly thanks to powerful algorithms, the ability to collect and connect large amounts of data, and breakthroughs in machine learning and creative generative models.

Tools like ChatGPT illustrate AI’s creative potential; however, they also show unreliability (hallucinations, inaccuracies, etc.).

From “Circus Animals” to “Pets”

The speaker uses a metaphor to describe AI’s evolution: moving from “circus animals,” where AI systems perform impressive but narrow feats (like winning at chess or Go), to “pets,” implying AI systems that are more domesticated, useful, and obedient in everyday contexts.

The AI Paradox

On one hand, AI is faster and makes fewer mistakes than humans for certain tasks. On the other hand, AI can make mistakes on a larger scale, and it can do so very quickly, raising concerns about safety, reliability, and oversight.

What Now?

The presentation closes with the question: “Can AI verify AI?” referring to the challenge of ensuring AI’s correctness and trustworthiness.

This highlights the ongoing dilemma: balancing performance gains with robust safeguards so that AI remains both powerful and under human control.

Overall, the presentation underscores the promise of AI’s growing capabilities alongside the critical need to address safety, reliability, and ethical considerations—embodying the central paradox of pushing performance without compromising control.

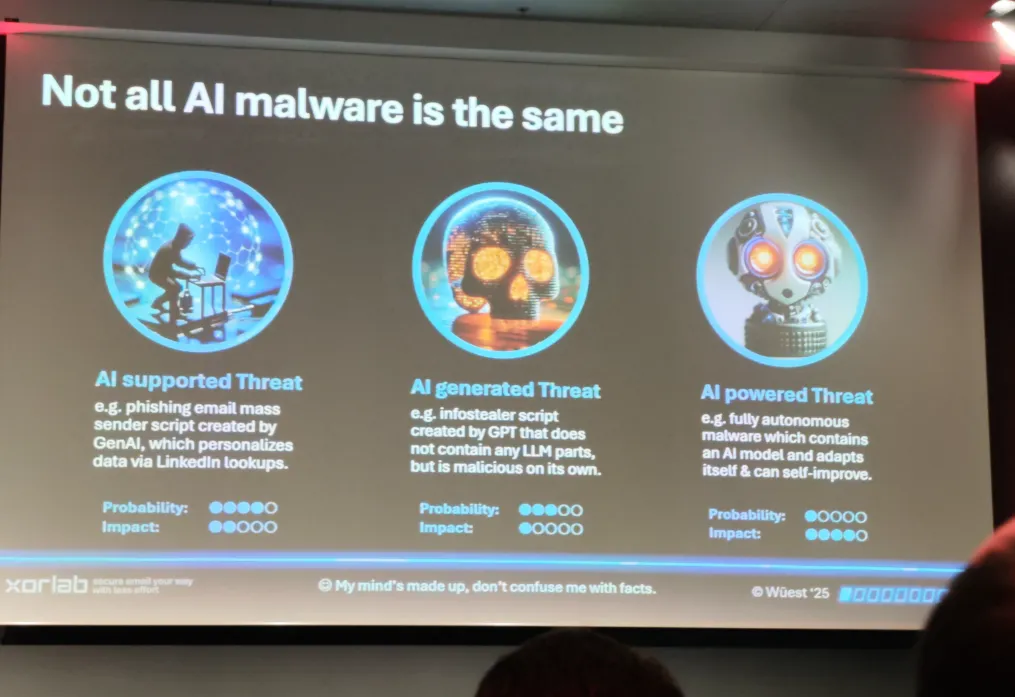

6️⃣ The Rise of AI-Driven Malware: Threats, Myths and Defenses

Speaker: Candid Wuest

TL;DR

This talk explored how AI is transforming malware creation and evasion tactics, from script obfuscation to autonomous attacks, while emphasizing that robust, layered defenses remain effective against these evolving threats.

The speaker emphasized that malware is increasingly “AI-supported,” but fully autonomous AI-driven threats remain rare.

Classic techniques like script obfuscation and polymorphism still work and AI can make them easier. AI can help craft malware and automate attacks, but it isn’t a magic one-click solution. Security fundamentals (behavior-based detection, network monitoring, layered defenses) remain effective.

Key points & Technical content

AI-Driven vs. AI-Supported Threats

The speaker distinguished three roles AI can play in malware: supporting it, generating it, or fully powering it.

AI-Supported Threats:

- Human-crafted code with AI aiding tasks like large-scale phishing customization or reconnaissance.

- High probability of occurrence in the wild, as generative AI can quickly personalize attacks.

AI-Generated Threats:

- Malware code generated directly by AI (e.g., ChatGPT).

- The resulting code does not need to rely on an AI at runtime, but it can still be malicious and functional.

AI-Powered Threats:

- Rare, fully autonomous malware containing embedded AI models.

- Can adapt on the fly, self-improve, and potentially outsmart static security measures.

- High impact but lower probability due to complexity and resource requirements.

While the degree of AI’s involvement shapes the threat landscape, the speaker next explained how long-standing adversarial techniques like obfuscation and metamorphic approaches remain at the core of evasion tactics, bridging classic malware methods with new AI-assisted capabilities.

Obfuscation and Metamorphic Techniques

Obfuscation isn’t new: tools like PSObf, InvokeStealth, and obfuscator.io have long helped attackers disguise scripts and binaries. AI can automate code changes and encryption; however, the speaker noted that overusing obfuscation can itself trigger suspicion.
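One reason heavy obfuscation stands out is that packed or encoded payloads look statistically unlike normal code. A common, simple heuristic (not one the speaker presented, and far from sufficient on its own) is Shannon entropy over a script's bytes; the threshold and the looks_obfuscated helper below are assumptions for illustration.

```python
import math
from collections import Counter

def shannon_entropy(data: bytes) -> float:
    """Bits of entropy per byte (0.0 for empty input, at most 8.0)."""
    if not data:
        return 0.0
    n = len(data)
    return -sum(c / n * math.log2(c / n) for c in Counter(data).values())

ENTROPY_THRESHOLD = 5.5  # hypothetical cut-off; would need tuning on real samples

def looks_obfuscated(script: bytes) -> bool:
    return shannon_entropy(script) > ENTROPY_THRESHOLD
```

Plain, readable scripts draw on a limited character set and score well below the threshold, encrypted or compressed blobs approach 8 bits per byte, and base64-encoded data tops out at 6. Legitimate minified or packed code also scores high, which is why such a signal only raises suspicion rather than proving malice.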

A key point that was talked about here was that behavior-based detection remains crucial: If the code’s actions are malicious, advanced hiding only slows, but doesn’t always stop, skilled defenders.

But then we also have so-called polymorphic and metamorphic malware that go a step further by continually morphing the payload. Examples like BlackMamba or LLMorph III show how AI models can re-generate code or encryption keys for each infection, frustrating static signature detection.

The main challenge for attackers is that each new version might be unstable or buggy unless it is thoroughly tested.

On the defensive side, defenders look for repeated outbound traffic and suspicious loaders, since even mutated malware has to execute and communicate.
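The "repeated outbound traffic" signal can be made concrete with a simple beaconing heuristic: malware that checks in on a timer produces connection intervals to the same destination with low variation, however much its code mutates. A sketch, assuming connection logs as (timestamp, destination) pairs; find_beacons and its thresholds are illustrative, not from the talk, and real implants add jitter precisely to blur this signal.

```python
import statistics
from collections import defaultdict
from typing import Dict, List, Tuple

MIN_EVENTS = 5   # hypothetical: enough samples to judge regularity
MAX_CV = 0.1     # hypothetical: max coefficient of variation of intervals

def find_beacons(events: List[Tuple[float, str]]) -> Dict[str, float]:
    """Return destinations whose inter-connection intervals are suspiciously
    regular, mapped to their mean interval in seconds."""
    times: Dict[str, List[float]] = defaultdict(list)
    for ts, dest in events:
        times[dest].append(ts)
    beacons: Dict[str, float] = {}
    for dest, ts_list in times.items():
        if len(ts_list) < MIN_EVENTS:
            continue
        ts_list.sort()
        intervals = [b - a for a, b in zip(ts_list, ts_list[1:])]
        mean = statistics.mean(intervals)
        if mean <= 0:
            continue
        cv = statistics.pstdev(intervals) / mean   # spread relative to the mean
        if cv <= MAX_CV:
            beacons[dest] = mean
    return beacons
```

A host contacting c2.example roughly every 60 seconds would be flagged, while irregular browsing traffic to another site would not; in practice this runs alongside allow-lists, since legitimate software (update checks, telemetry) also beacons.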

While obfuscation and metamorphic methods remain core evasion tactics, the presentation then shifted to how AI increasingly fuels offensive security strategies, from automated vulnerability discovery to AI-driven exploitation.

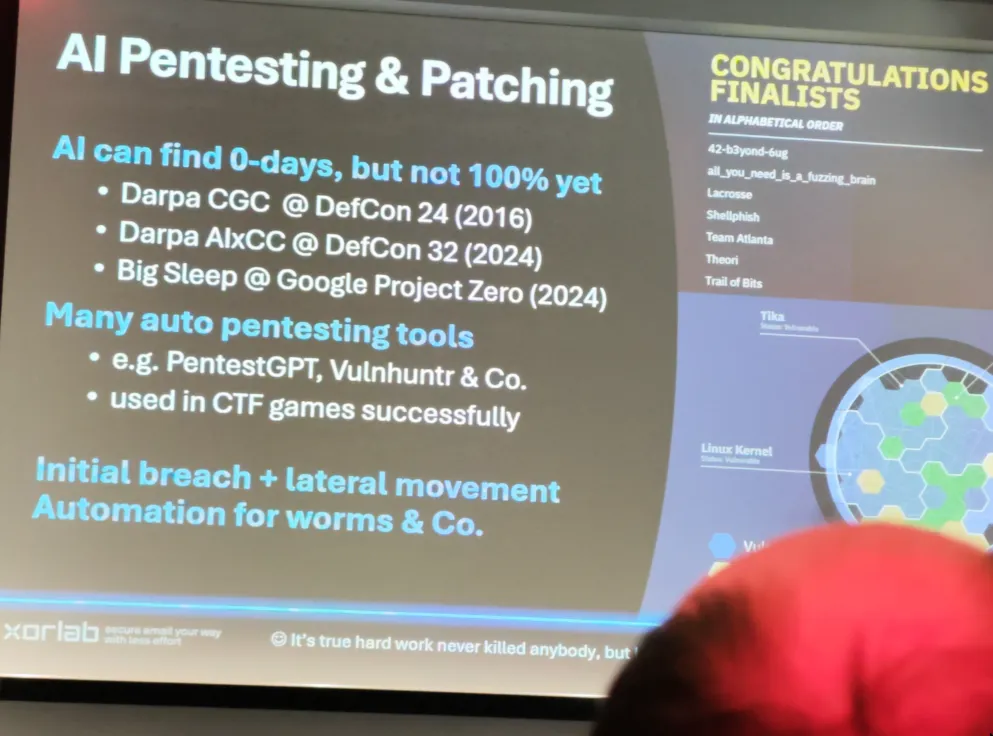

AI in Offensive Security

The presentation covered ways AI is reshaping pentesting and vulnerability research:

- Automated Pentesting Tools (e.g., PentestGPT, Vulnhuntr) can quickly scan systems, search for known exploits, and even discover zero-day vulnerabilities.

- Competitions like DARPA Cyber Grand Challenge and DARPA AIxCC emphasize autonomous systems discovering and patching vulnerabilities.

- AI’s role in lateral movement and automated exploitation is growing, hinting at possible self-spreading worms or advanced persistent threats relying heavily on AI logic.

After highlighting AI’s offensive potential in pentesting and attacks, the speaker shared proof-of-concept findings that illustrate both the capabilities and limits of AI-driven malware.

Proof-of-Concept (PoC) Insights

From the PoC experiences shared:

- Prompt engineering is key as AI needs strict guardrails; otherwise, it might attempt to download external scripts or produce unintended functionalities.

- Code quality & verification matter: AI-generated code may be only around “70% correct,” so it requires human oversight.

- Hijacking or installing an AI “agent” can streamline repeated tasks like downloading, testing, and executing malicious code.