Side-channel attacks: why does performance matter?

The notion of security is not absolute; it always relates to a threat context. For instance, the widely used Common Criteria security evaluation scheme gives assurance about whether an attacker with a given power level can compromise a secret asset. Even if the evaluation is positive (i.e., the target is considered secure), an attacker with more time or means may still be able to find a weakness.

The Joint Interpretation Library document Application of Attack Potential to Smartcards and Similar Devices, known as the JIL rating [JIL2019], is widely used to rate physical attacks and gives a clear definition of the attacker level. It considers the knowledge of the TOE (Target of Evaluation), the time spent, the equipment and the expertise level. But what about the side-channel attack tools?

Indeed, the performance of attack tools is a key point, especially for side-channel analysis, as it may greatly affect the time criterion. Performance matters for both main steps of a side-channel analysis: the acquisition and the attack.

A side-channel example

Imagine two security labs:

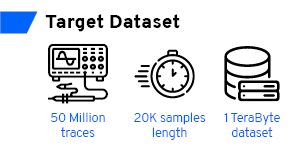

- The first one uses a cutting-edge tool. Within two weeks, the expert can acquire, align and attack 50 million traces, which represent 1TB of data. The analysis covers tens of intermediate values with ten different leakage models, and includes first- and second-order attacks.

- The second, within the same two weeks, can collect and analyse only 2 million traces. The analysis covers only first-order Hamming weight leakages.

Is it fair to say that these two evaluations provide the same security assurance level?

But is this example relevant? Who collects 50 million traces to assess the side-channel security of a component?!

It may not be relevant for a smart card. But for a modern SoC that performs firmware decryption, it is not extravagant. At 1GHz, a thousand AES operations can be executed in under 50µs, and 50 million secure AES executions complete within 30 seconds.

Using the full capabilities of a high-end oscilloscope, it is possible to acquire more than 500 AES executions per second. Thus, it is entirely realistic to acquire 50 million AES executions within 24 hours.
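As a quick sanity check of these figures, here is a minimal sketch of the acquisition arithmetic. The function names are illustrative, and the rates are assumed to be sustained over the whole campaign, with no time lost to set-up or data transfer.

```python
def acquisition_hours(n_traces: int, traces_per_second: float) -> float:
    """Hours needed to record n_traces at a sustained acquisition rate."""
    return n_traces / traces_per_second / 3600


def required_rate(n_traces: int, hours: float) -> float:
    """Sustained rate (traces/s) needed to record n_traces within `hours`."""
    return n_traces / (hours * 3600)


n_traces = 50_000_000

# Rate needed to fit the whole campaign into one day.
print(f"rate needed for 24 h: {required_rate(n_traces, 24):.0f} traces/s")

# Campaign duration for two assumed sustained rates.
for rate in (500, 600):
    print(f"time at {rate} traces/s: {acquisition_hours(n_traces, rate):.1f} h")
```

Under these assumptions, a rate of exactly 500 traces per second slightly overshoots one day, while a rate just under 600 traces per second fits within it.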

And again, it is not extravagant. For instance, during one of my recent tests, the first leakages appeared only after analysing more than 10 million traces.

What is "good performance"?

But what does good performance mean for side-channel attacks? A few years ago, when eShard open-sourced its side-channel analysis library scared (eShard scared blogpost), it was one of the fastest tools for side-channel attacks (tell us what you think about scared). scared was benchmarked against other open-source libraries and against public information about commercial tools. However, most commercial software for side-channel attacks is closed source, and no specific information about its performance is available.

Since I started working on eShard’s esDynamic program (a commercial platform based on the scared library), I have had a goal: to perform an attack on 1TB of data (20K samples, 50M traces) within one hour. A few years later, I have to be realistic: this is not achievable with CPU computing alone.

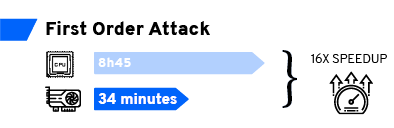

Despite optimization efforts, notably the wide use of efficient matrix operations to leverage multi-core architectures, a classical attack on this 1TB dataset still takes too long. By a classical attack I mean a CPA (Correlation Power Analysis) on an AES, targeting the Hamming weight leakage after the SubBytes operation. On our server, with 2x CPU E5-2650 v4 @ 2.40GHz, this attack takes at least 8 hours and 45 minutes. For a known-key characterization, i.e. without key guesses, the analysis requires at least an hour and a half.
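To make the "classical attack" concrete, here is a minimal sketch of a CPA on one AES key byte, written with plain NumPy on simulated traces. It is not the scared or esDynamic API: the names `aes_sbox`, `cpa_byte`, `true_key` and `leak_at` are illustrative, and the leakage (Hamming weight of the SubBytes output plus Gaussian noise at one sample) is an assumption of the simulation.

```python
import numpy as np


def aes_sbox() -> np.ndarray:
    """Build the AES S-box from its definition: GF(2^8) inverse, then affine map."""
    def gf_mul(a: int, b: int) -> int:
        r = 0
        while b:
            if b & 1:
                r ^= a
            a <<= 1
            if a & 0x100:
                a ^= 0x11B  # reduce modulo the AES polynomial
            b >>= 1
        return r

    def rotl8(x: int, n: int) -> int:
        return ((x << n) | (x >> (8 - n))) & 0xFF

    sbox = np.zeros(256, dtype=np.uint8)
    for x in range(256):
        inv = 0 if x == 0 else next(y for y in range(1, 256) if gf_mul(x, y) == 1)
        sbox[x] = inv ^ rotl8(inv, 1) ^ rotl8(inv, 2) ^ rotl8(inv, 3) ^ rotl8(inv, 4) ^ 0x63
    return sbox


SBOX = aes_sbox()
HW = np.array([bin(v).count("1") for v in range(256)], dtype=np.uint8)


def cpa_byte(traces: np.ndarray, plaintext_byte: np.ndarray) -> np.ndarray:
    """Return |Pearson correlation| with shape (256 key guesses, n_samples)."""
    guesses = np.arange(256, dtype=np.uint8)
    # Predicted leakage for every (trace, guess): HW(SBOX[p ^ k]).
    model = HW[SBOX[plaintext_byte[:, None] ^ guesses[None, :]]].astype(np.float64)
    model -= model.mean(axis=0)
    centered = traces - traces.mean(axis=0)
    # A single matrix product yields the covariance of all guesses with all samples.
    cov = model.T @ centered
    norm = np.outer(np.linalg.norm(model, axis=0), np.linalg.norm(centered, axis=0))
    return np.abs(cov / norm)


# Simulated acquisition (assumption): noise everywhere, leakage at one sample.
rng = np.random.default_rng(0)
n_traces, n_samples, true_key, leak_at = 2000, 50, 0x2B, 17
plaintexts = rng.integers(0, 256, size=n_traces, dtype=np.uint8)
traces = rng.normal(0.0, 2.0, size=(n_traces, n_samples))
traces[:, leak_at] += HW[SBOX[plaintexts ^ true_key]]

scores = cpa_byte(traces, plaintexts)
best_guess = int(scores.max(axis=1).argmax())
print(f"best guess: {best_guess:#04x}, peak at sample {int(scores[best_guess].argmax())}")
```

The cost is dominated by the matrix product, whose size grows with the number of traces, samples and key guesses. That is why the known-key characterization, which needs no key guesses, is so much cheaper.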

One step beyond 🎼

This is why we are working on more advanced approaches to improve analysis performance: GPU and distributed computing.

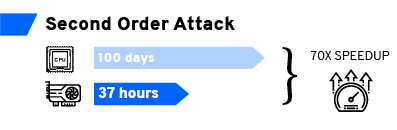

For first-order attacks, a consumer-grade GTX 1080Ti GPU gives a 16x speedup compared to the CPU implementation. The previously mentioned attack on 1TB of data, targeting the Hamming weight of the SubBytes output, then becomes doable in about 34 minutes. For second-order attacks, the speedup can reach 70x.

It is well known that the main bottleneck for GPU computing is the quantity of memory. A second-order attack on 1400 samples produces 16.1GB of results (with float32 precision), which is too big for most available GPUs.
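The text does not say how the 16.1GB figure is derived. One set of assumptions that reproduces it is sketched below: one float32 result per unordered pair of samples (a sample paired with itself included), per key guess (256) and per AES key byte (16). The function name and its default parameters are illustrative.

```python
def second_order_result_bytes(
    n_samples: int,
    n_guesses: int = 256,      # assumption: one result per key-byte guess
    n_key_bytes: int = 16,     # assumption: all 16 AES key bytes are attacked
    bytes_per_value: int = 4,  # float32
) -> int:
    """Size of the result array of a second-order attack, under the assumptions above."""
    # Unordered pairs (i, j) with i <= j.
    n_pairs = n_samples * (n_samples + 1) // 2
    return n_pairs * n_guesses * n_key_bytes * bytes_per_value


size = second_order_result_bytes(1400)
print(f"{size / 1e9:.1f} GB")  # prints "16.1 GB"
```

The size grows quadratically with the number of samples, so doubling the attack window roughly quadruples the memory needed for the results.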

That is where distributed computing comes into play. With a dedicated framework, a second-order attack can be split into several parts, each sized to fit the GPU memory.

And if you have multiple GPUs available, in the same machine or within a computing farm, attacks can be performed in parallel.
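The splitting idea can be sketched as follows, using NumPy on the CPU for illustration. This is not the esDynamic framework: the helper names are hypothetical, the memory budget of 11GB is an assumption, and the budget only accounts for the result array (256 guesses, 16 key bytes, float32), not for the traces or intermediate buffers.

```python
import math

import numpy as np


def max_pairs_for_memory(memory_bytes: int, n_guesses: int = 256,
                         n_key_bytes: int = 16, bytes_per_value: int = 4) -> int:
    """Largest number of sample pairs whose results fit in the memory budget."""
    return memory_bytes // (n_guesses * n_key_bytes * bytes_per_value)


def pair_chunks(n_samples: int, max_pairs: int):
    """Yield (i_idx, j_idx) arrays covering all pairs i <= j, max_pairs at a time."""
    i_idx, j_idx = np.triu_indices(n_samples)
    for start in range(0, len(i_idx), max_pairs):
        yield i_idx[start:start + max_pairs], j_idx[start:start + max_pairs]


def centered_product(traces: np.ndarray, i_idx: np.ndarray, j_idx: np.ndarray) -> np.ndarray:
    """Second-order combination: (t_i - mean_i) * (t_j - mean_j) for each trace."""
    centered = traces - traces.mean(axis=0)
    return centered[:, i_idx] * centered[:, j_idx]


# Number of parts for a 1400-sample window under an assumed 11 GB budget.
budget_pairs = max_pairs_for_memory(11_000_000_000)
n_parts = math.ceil(1400 * 1401 // 2 / budget_pairs)
print(f"{budget_pairs} pairs per part, {n_parts} parts")

# Small demonstration: every chunk is independent and could go to its own worker.
rng = np.random.default_rng(1)
traces = rng.normal(size=(100, 20))
chunks = list(pair_chunks(20, max_pairs=64))
combined = [centered_product(traces, i, j) for i, j in chunks]
total_pairs = sum(c.shape[1] for c in combined)
print(f"{len(chunks)} chunks, {total_pairs} pairs")
```

Each chunk of combined traces can then be fed to a first-order attack on its own, which is what makes the work easy to spread across several GPUs or machines.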

With these new tools, things that seemed unrealistic become achievable. Indeed, with our 50M-trace dataset, attacking 1500 samples with the centered product second-order technique requires about 100 days of CPU processing (with our implementation on our server). With GPU computing, this processing time can be reduced to less than 37h. If distributed computing is added, it can easily go down to less than a day. Thus, the combination of GPU and distributed computing can reduce the execution of some side-channel attacks from 100 days to less than one day.
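The speedups implied by the timings quoted in this article can be checked with a few lines. This is only arithmetic on the rounded figures given in the text; the worker estimate assumes ideal scaling, which real distributed setups rarely reach, and the function names are illustrative.

```python
import math


def speedup(before_hours: float, after_hours: float) -> float:
    """Ratio between two processing times."""
    return before_hours / after_hours


def workers_needed(total_hours: float, target_hours: float) -> int:
    """Ideal-scaling estimate of equal workers needed to finish within target_hours."""
    return math.ceil(total_hours / target_hours)


# Second order: about 100 days on CPU versus less than 37 h on GPU.
print(f"second-order speedup: {speedup(100 * 24, 37):.1f}x")

# First order: at least 8 h 45 min on CPU versus about 34 min on GPU.
print(f"first-order speedup: {speedup(8.75, 34 / 60):.1f}x")

# GPUs needed to bring 37 h under one day, assuming perfect scaling.
print(f"GPUs for under 24 h: {workers_needed(37, 24)}")
```

Both ratios land close to the 16x and 70x speedups mentioned earlier, which suggests the quoted timings are mutually consistent.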

Of course, the techniques that improve the performance of classical side-channel attacks, namely GPU and distributed computing, are also valuable for deep learning side-channel analysis. Indeed, side-channel analysis using machine learning requires a lot of computational power to tune the parameters and thus maximise the chance of success. This process can be widely distributed across several GPUs.

I hope this is only the beginning for these new tools in esDynamic, and that further improvements will come quickly to reach the next level of performance.